Difference between revisions of "Data Validation"


Revision as of 09:50, 24 February 2021


Overview

Data Validation checks principally whether a dataset conforms to the Data Structure Definition and complies with any specified Content Constraints.

Data Validation is split into three high-level validation processes:

- Syntax Validation - is the syntax of the dataset correct?

- Duplicates - a format-agnostic process of rolling up duplicate series and observations

- Syntax Agnostic Validation - does the dataset contain the correct content?

Data Validation can be performed either via the web User Interface of the Fusion Registry, or by POSTing data directly to the Fusion Registry's data validation web service.

Syntax Validation

Syntax Validation refers to validation of the reported dataset in terms of the file syntax. If the dataset is in SDMX-ML, this ensures the XML is well formed and that the XML Elements and XML Attributes are as expected. If the dataset is in Excel format (proprietary to the Fusion Registry), these checks ensure the data complies with the expected Excel format.
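As an illustration of the first step of an XML syntax check, the sketch below tests only whether a document is well formed, using Python's standard library. It is not the Fusion Registry's implementation, and the element and attribute names in the sample strings are simplified placeholders rather than real namespaced SDMX-ML.

```python
import xml.etree.ElementTree as ET


def is_well_formed(xml_text):
    """Return (True, "") if the text parses as XML, else (False, reason)."""
    try:
        ET.fromstring(xml_text)
    except ET.ParseError as exc:
        return False, str(exc)
    return True, ""


# Simplified placeholder documents (not real SDMX-ML).
good = "<DataSet><Series FREQ='A'><Obs TIME_PERIOD='2009' OBS_VALUE='12.2'/></Series></DataSet>"
bad = "<DataSet><Series FREQ='A'></DataSet>"  # the Series element is never closed

print(is_well_formed(good))
print(is_well_formed(bad))
```

A full syntax check would go further and verify that the elements and attributes are the expected ones, for example against the relevant schema.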

Duplicates Validation

Part of the validation process is the consolidation of a dataset. Consolidation refers to ensuring any duplicate series are 'rolled up' into a single series. This process is important for data formats such as SDMX-EDI, where the series and observation attributes are reported at the end of a dataset, after all the observation values have been reported.

Example: Input Dataset Unconsolidated

| Frequency | Reference Area | Indicator | Time | Observation Value | Observation Note |

|---|---|---|---|---|---|

| A | UK | IND_1 | 2009 | 12.2 | - |

| A | UK | IND_1 | 2010 | 13.2 | - |

| A | UK | IND_1 | 2009 | - | A Note |

After Consolidation:

| Frequency | Reference Area | Indicator | Time | Observation Value | Observation Note |

|---|---|---|---|---|---|

| A | UK | IND_1 | 2009 | 12.2 | A Note |

| A | UK | IND_1 | 2010 | 13.2 | - |

The above consolidation process does not report the duplicate as an error, because the duplicate does not report contradictory information; it supplies extra information. If the dataset were to contain two series with contradictory observation values or attributes, this would be reported as a duplication error.

Example: Duplicate error for the observation value reported for 2009

| Frequency | Reference Area | Indicator | Time | Observation Value | Observation Note |

|---|---|---|---|---|---|

| A | UK | IND_1 | 2009 | 12.2 | - |

| A | UK | IND_1 | 2010 | 13.2 | - |

| A | UK | IND_1 | 2009 | 12.3 | A Note |
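The consolidation rule described above can be sketched as follows. This is a minimal illustration, not the Fusion Registry's code: the field names are hypothetical, and `None` stands in for the "-" (not reported) cells in the example tables.

```python
KEY_FIELDS = ("freq", "ref_area", "indicator", "time")
VALUE_FIELDS = ("obs_value", "obs_note")


def consolidate(rows):
    """Roll up rows that share the same series key and time period.

    Returns (consolidated_rows, errors). A value of None means 'not reported'.
    """
    merged = {}  # key tuple -> merged values, kept in first-seen order
    errors = []
    for row in rows:
        key = tuple(row[f] for f in KEY_FIELDS)
        target = merged.setdefault(key, {f: None for f in VALUE_FIELDS})
        for f in VALUE_FIELDS:
            new = row.get(f)
            if new is None:
                continue  # nothing reported, so nothing to merge
            if target[f] is None:
                target[f] = new  # extra information: fill the gap
            elif target[f] != new:
                errors.append(f"Duplicate {f} for {key}: {target[f]!r} vs {new!r}")
    consolidated = [dict(zip(KEY_FIELDS, key), **values) for key, values in merged.items()]
    return consolidated, errors


def row(time, value=None, note=None):
    """Build one row of the example series A / UK / IND_1."""
    return {"freq": "A", "ref_area": "UK", "indicator": "IND_1",
            "time": time, "obs_value": value, "obs_note": note}


# First example: the third row only adds a note, so it is rolled up silently.
ok_rows, ok_errors = consolidate(
    [row("2009", "12.2"), row("2010", "13.2"), row("2009", note="A Note")])

# Second example: 2009 is reported as both 12.2 and 12.3, which is contradictory.
bad_rows, bad_errors = consolidate(
    [row("2009", "12.2"), row("2010", "13.2"), row("2009", "12.3", "A Note")])

print(ok_rows, ok_errors)
print(bad_errors)
```

In this sketch the first reported value is kept when a contradiction is found; how a real implementation treats the conflicting rows is not stated in this page.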

Syntax Agnostic Validation

Syntax Agnostic Validation is where the majority of the data validation process happens. Like the name suggests, the validation is syntax agnostic, and therefore the same validation rules and processes are applied to all datasets, regardless of the format the data was uploaded in.

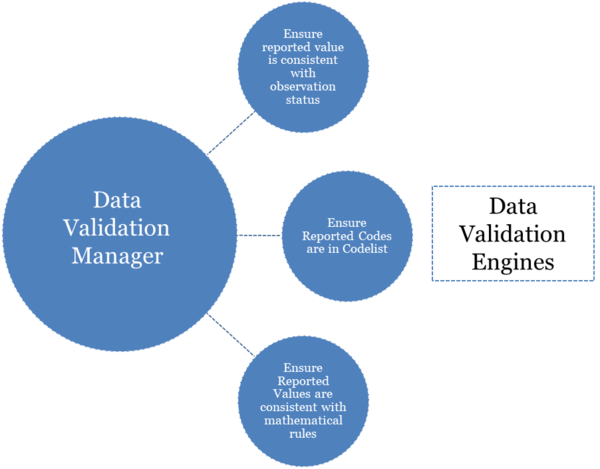

This validation process makes use of a single Validation Manager and multiple Validation Engines. The Validation Manager walks the contents of the dataset (Series and Observations) in a streaming fashion and, as each new Series or Observation is read in, asks each registered Validation Engine the same question: "is this valid?".

A conceptual example of the Validation Manager delegating validation questions to each Validation Engine in turn

The purpose of a Validation Engine is to perform ONE type of validation. This allows each Validation Engine to be configured as a separate entity, and makes it easy to add new Validation Engines to the product when a new type of validation rule needs to be implemented. Validation Engines can be switched off or have a different level of error reporting set. A Validation Engine can also have an error limit set, so that a single engine can be decommissioned from validating a particular dataset if it is reporting too many errors. In the validation report that is produced, the errors are grouped per Validation Engine.

The following table shows each Validation Engine and its purpose:
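The manager and engine arrangement can be sketched as below. All class names, method names, and checks here are hypothetical and chosen for illustration; the sketch only shows the ideas stated above: one check per engine, engines that can be switched off, an optional error limit, and a report grouped per engine.

```python
class ValidationEngine:
    """Performs one type of validation (hypothetical interface)."""

    def __init__(self, name, check, enabled=True, error_limit=None):
        self.name = name
        self.check = check              # callable: item -> error message or None
        self.enabled = enabled          # engines can be switched off
        self.error_limit = error_limit  # None means no limit
        self.errors = []

    @property
    def active(self):
        under_limit = self.error_limit is None or len(self.errors) < self.error_limit
        return self.enabled and under_limit

    def validate(self, item):
        message = self.check(item)
        if message is not None:
            self.errors.append(message)


class ValidationManager:
    """Streams items and asks each active engine 'is this valid?'."""

    def __init__(self, engines):
        self.engines = list(engines)

    def run(self, items):
        for item in items:  # any iterable works, including a generator
            for engine in self.engines:
                if engine.active:
                    engine.validate(item)
        # Errors are grouped per engine in the report.
        return {engine.name: engine.errors for engine in self.engines}


def value_is_numeric(obs):
    try:
        float(obs["value"])
    except ValueError:
        return f"{obs['time']}: value {obs['value']!r} is not numeric"
    return None


def annual_period(obs):
    if len(obs["time"]) == 4 and obs["time"].isdigit():
        return None
    return f"{obs['time']!r} is not an annual period"


# Five observations, all with a non-numeric value, read as a stream.
observations = ({"time": str(2000 + i), "value": "n/a"} for i in range(5))
manager = ValidationManager([
    ValidationEngine("Representation", value_is_numeric, error_limit=2),
    ValidationEngine("Frequency Match", annual_period),
])
report = manager.run(observations)
print(report)
```

Because the first engine has an error limit of 2, it stops being consulted after its second error, while the second engine continues to check every observation.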

| Validation Type | Validation Description |

|---|---|

| Structure | Ensures the Dataset reports all Dimensions and does not include any additional Dimensions or Attributes |

| Representation | Ensures the reported values for Dimensions, Attributes, and Observation values comply with the DSD |

| Mandatory Attributes | Ensures all Attributes, as defined in the Data Structure Definition (DSD), are reported if they are marked as Mandatory |

| Constraints | Ensures the reported values have not been disallowed due to Content Constraint definitions |

| Mathematical Rules | Performs any mathematical calculations, defined in Validation Schemes, to ensure compliance |

| Frequency Match | Ensures the reported Frequency code matches the reported time period. Example: FREQ=A expects time periods in the format YYYY |

| Obs Status Match | Ensures observation values are in keeping with the Observation Status. Example: a status of Missing Value does not expect a value to be reported |

| Missing Time Period | Ensures the Series has no holes in the reported time periods |
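Two of the checks in the table, Frequency Match and Missing Time Period, can be illustrated with a short sketch. The annual pattern (YYYY) comes from the table above; the monthly pattern (YYYY-MM) and all function names are assumptions added for illustration, and the gap check is sketched for annual series only.

```python
import re

# Period patterns per frequency code. "A" follows the table above;
# "M" is an assumed monthly format included only as a second example.
PERIOD_PATTERNS = {
    "A": re.compile(r"\d{4}"),
    "M": re.compile(r"\d{4}-(0[1-9]|1[0-2])"),
}


def frequency_matches(freq, period):
    """True if the time period string fits the pattern for the frequency code."""
    pattern = PERIOD_PATTERNS.get(freq)
    return pattern is not None and pattern.fullmatch(period) is not None


def missing_annual_periods(periods):
    """Return the years missing between the first and last reported year."""
    years = sorted(int(p) for p in periods)
    if not years:
        return []
    reported = set(years)
    return [str(y) for y in range(years[0], years[-1] + 1) if y not in reported]


print(frequency_matches("A", "2009"))
print(frequency_matches("A", "2009-01"))
print(missing_annual_periods(["2009", "2010", "2012"]))
```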

Validation With Transformation

Fusion Registry supports a validation process that combines data validation with data transformation. The output can be either the valid dataset only (with invalid observations removed), or both the valid dataset and the invalid dataset.

See the Data Validation Web Service for details on how to achieve this.
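Conceptually, the two outputs amount to partitioning the observations by validation result. The sketch below shows only that partitioning step, with a hypothetical validity check and field names; it does not use or represent the web service itself.

```python
def split_dataset(observations, is_valid):
    """Partition observations into (valid, invalid) lists, preserving order."""
    valid, invalid = [], []
    for obs in observations:
        (valid if is_valid(obs) else invalid).append(obs)
    return valid, invalid


def has_numeric_value(obs):
    """Hypothetical validity rule: the observation value must parse as a number."""
    try:
        float(obs["value"])
    except (TypeError, ValueError):
        return False
    return True


observations = [
    {"time": "2009", "value": "12.2"},
    {"time": "2010", "value": "abc"},
    {"time": "2011", "value": "13.2"},
]
valid, invalid = split_dataset(observations, has_numeric_value)
print(valid)
print(invalid)
```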

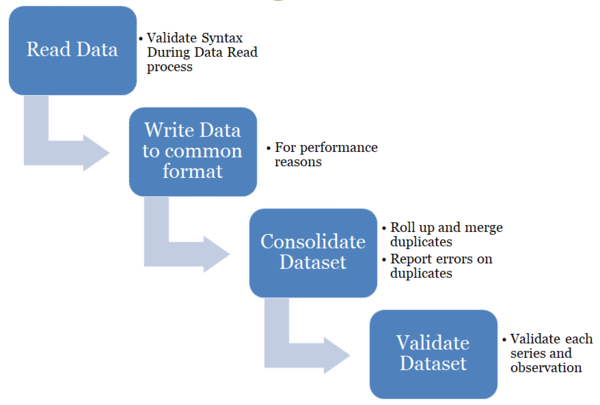

Performance

The data format has some impact on performance, as the time taken for the initial data read and the syntax-specific validation rules depends on the format. After the initial checks are performed, an intermediary data format is used to perform consolidation and syntax agnostic checks. Therefore the performance of the data consolidation stage and syntax agnostic data validation is the same regardless of import format.

Considerations for optimising performance:

- To reduce network traffic, upload the data file as a Zip

- If the dataset is coming from a URL, the server hosting it should support gzip responses

- Use a fast hard drive to optimise I/O, as temporary files will be used when validating large datasets

- Performance is dependent on CPU speed

When the server receives a zip file, there is some overhead in unzipping the file, but this overhead is very small compared to the performance gains in network transfer times.
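As a rough illustration of why zipping helps, the sketch below compresses a synthetic, highly repetitive XML-like payload in memory with Python's standard `zipfile` module and compares the sizes. The payload and file name are made up for the example; real compression ratios depend on the data.

```python
import io
import zipfile


def zip_bytes(filename, payload):
    """Return a zip archive (as bytes) containing payload under filename."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        archive.writestr(filename, payload)
    return buffer.getvalue()


# Synthetic payload: repetitive markup, similar in spirit to a data file.
payload = b"".join(
    b'<Obs TIME_PERIOD="%d" OBS_VALUE="12.2"/>\n' % year for year in range(1900, 2900)
)
zipped = zip_bytes("dataset.xml", payload)

print(len(payload), len(zipped))
```

The resulting bytes can be written to a `.zip` file or used directly as the body of an upload.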

Tests have been carried out on a dataset with the following properties:

| Property | Value |

|---|---|

| Series | 216,338 |

| Observations | 15,470,893 |

| Dimensions | 15 |

| Dataset Attributes | 3 |

| Observation Attributes | 2 |

The following table shows the file size and validation duration for each data format:

| Data Format | File Size Uncompressed | File Size Zipped | Syntax Validation (seconds) | Data Consolidation (seconds) | Validate Dataset (seconds) | Total Duration (seconds) |

|---|---|---|---|---|---|---|

| SDMX Generic 2.1 | 3.6 GB | 94 MB | 165 | 37 | 57 | 312 |

| SDMX Compact 2.1 | 1.1 GB | 59 MB | 84 | 37 | 57 | 251 |

| SDMX JSON | 362 MB | 41 MB | 65 | 36 | 58 | 224 |

| SDMX CSV | 1.3 GB | 80 MB | 71 | 36 | 58 | 240 |

| SDMX EDI | 153 MB | 71 MB | 68 | 36 | 58 | 230 |

Note 1: All times are rounded to the nearest second

Note 2: Total duration includes other processes which are not included in this table
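The figures in the table can be combined into two derived numbers per format: the time spent in processes not listed in the table (total minus the three listed stages) and the overall throughput in observations per second. The sketch below is plain arithmetic on the published values; the function and key names are illustrative.

```python
OBSERVATIONS = 15_470_893

# (syntax validation, data consolidation, validate dataset, total) in seconds
TIMINGS = {
    "SDMX Generic 2.1": (165, 37, 57, 312),
    "SDMX Compact 2.1": (84, 37, 57, 251),
    "SDMX JSON": (65, 36, 58, 224),
    "SDMX CSV": (71, 36, 58, 240),
    "SDMX EDI": (68, 36, 58, 230),
}


def summarise(timings, observations):
    """Derive unlisted time and overall throughput for each data format."""
    summary = {}
    for fmt, (syntax, consolidation, validation, total) in timings.items():
        summary[fmt] = {
            "other_seconds": total - (syntax + consolidation + validation),
            "obs_per_second": round(observations / total),
        }
    return summary


summary = summarise(TIMINGS, OBSERVATIONS)
for fmt, figures in summary.items():
    print(fmt, figures)
```

For SDMX Generic 2.1, for example, the three listed stages sum to 259 seconds, leaving 53 seconds for the other processes mentioned in Note 2.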

Web Service

As an alternative to the Fusion Registry web User Interface, the data validation web service can be used to validate data from external tools or processes.

Security

Data Validation is by default a public service and as such a user can perform data validation with no authentication required. It is possible to change the security level in the Registry to either:

- Require that a user is authenticated before they can perform ANY data validation

- Require that a user is authenticated before they can perform data validation on a dataset obtained from a URL
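From an external tool, a call to the web service could be prepared along the following lines. This is a sketch under stated assumptions: the URL is a placeholder and not the real endpoint, and both the content type and the use of HTTP Basic authentication are assumptions to be checked against the Data Validation Web Service documentation. The code only builds the request object and does not send it.

```python
import base64
import urllib.request

# Placeholder URL (assumption): replace with the endpoint documented
# for your Fusion Registry installation.
VALIDATION_URL = "https://registry.example.org/placeholder/data/validate"


def build_validation_request(payload, url=VALIDATION_URL, username=None, password=None):
    """Build (but do not send) a POST request carrying the dataset bytes."""
    request = urllib.request.Request(url, data=payload, method="POST")
    # Assumed content type for an uploaded file.
    request.add_header("Content-Type", "application/octet-stream")
    if username is not None and password is not None:
        # Assumed scheme: HTTP Basic authentication.
        token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
        request.add_header("Authorization", f"Basic {token}")
    return request


anonymous = build_validation_request(b"dataset bytes")
authenticated = build_validation_request(b"dataset bytes", username="user", password="pass")

print(anonymous.get_method(), anonymous.full_url)
print(authenticated.has_header("Authorization"))
```

Passing the request to `urllib.request.urlopen` would send it. Credentials are only needed when the Registry has been configured to require authentication for validation.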